The Generic BI Frontend Tool Selection Process

(This blog was first published on http://rbranger.wordpress.com/.)

Finally, I'm blogging again. Time flew by, and "my life as a BI consultant" kept me busy with migrations from Oracle to Teradata and from BO XI 3.1 SP2 to SP6. Maybe you've also heard about our BusinessObjects Arbeitskreis (roughly "working group" or "workshop"), which I'd call the only conference in Europe dedicated to BOBJ: www.boak.ch. It was a pleasure to build and run an interesting agenda for our participants and to welcome great guests such as Jason Rose, Mani Srini, Saurabh Abhyankar, Mico Yuk and Carsten Bange.

I also had the pleasure of giving the closing keynote at BOAK, on BOBJ frontend tool selection. I used this opportunity to further develop a generic yet simple method for approaching frontend tool selection. I already formulated the basic idea in my last blog post back in April 2013, but I agree with some of the commenters that this first rule of thumb was perhaps too specific to apply in all situations. So let me share what I think is a more generic approach. By the way, you can of course use it for other BI vendors, not only SAP BusinessObjects. (The following illustrations are just examples; the listed and selected tools don't have any concrete meaning!)

PART A: Preparation

Step 1: List all available BI frontends

The first thing to do is to get an overview of which BI frontend tools a specific BI vendor offers in general. As I'm a big fan of working interactively with people, e.g. gathering in front of a whiteboard or flipchart, I suggest you write the product names on sticky notes and post them on the flipchart.

Step 2: Divide tools into "in scope" and "out of scope"

Depending on your environment, you can make a first, very rough tool selection and divide the tools listed in step 1 into two groups:

"Out of scope": This group is probably easier to start with. If you don't have SAP BW as a source, you can eliminate all tools that work with BW only. Or, if your security policy prohibits the use of Flash, Explorer or Xcelsius may be out of scope a priori.

"In scope": All the tools which are not out of scope.
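If you want to keep track of the scoping outside the flipchart, step 2 can be sketched in Python as a simple filter. This is only an illustration: the tool names and the two flags (`bw_only`, `needs_flash`) are hypothetical placeholders that mirror the two examples above, not properties of real products.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Tool:
    name: str
    bw_only: bool = False      # hypothetical flag: works with SAP BW as its only source
    needs_flash: bool = False  # hypothetical flag: requires Adobe Flash


@dataclass(frozen=True)
class Environment:
    has_bw: bool         # is SAP BW one of your sources?
    flash_allowed: bool  # does your security policy permit Flash?


def out_of_scope_reason(tool: Tool, env: Environment) -> Optional[str]:
    """Return why a tool is out of scope, or None if it stays in scope."""
    if tool.bw_only and not env.has_bw:
        return "works with SAP BW only, but BW is not a source"
    if tool.needs_flash and not env.flash_allowed:
        return "requires Flash, which the security policy prohibits"
    return None


def split_scope(tools: List[Tool], env: Environment) -> Tuple[List[str], Dict[str, str]]:
    """Step 2: divide the tools from step 1 into in-scope and out-of-scope groups."""
    in_scope: List[str] = []
    out_of_scope: Dict[str, str] = {}
    for tool in tools:
        reason = out_of_scope_reason(tool, env)
        if reason is None:
            in_scope.append(tool.name)  # "in scope" is simply everything not excluded
        else:
            out_of_scope[tool.name] = reason
    return in_scope, out_of_scope


# Illustrative sticky notes from step 1; the names and flags are placeholders.
tools = [Tool("Tool A"), Tool("Tool B", bw_only=True), Tool("Tool C", needs_flash=True)]
in_scope, excluded = split_scope(tools, Environment(has_bw=False, flash_allowed=False))
```

Keeping the reason next to each excluded tool makes it easy to revisit the decision if, say, BW becomes a source later.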

PART B: Build a working hypothesis

Step 3: Select the tool which covers most of your requirements

This step assumes that you have a fairly clear understanding of the business needs your BI solution is meant to address. I'm fully aware that this is often not the case. But to keep the basic tool selection process as simple as possible, I won't go into detail here about how to find the "right" requirements. Not yet, but maybe in a future blog post.

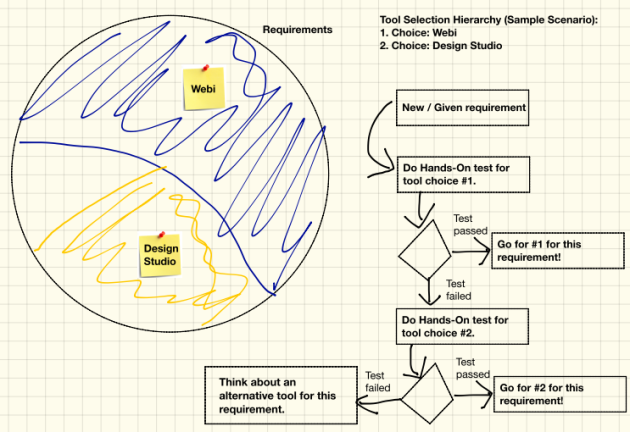

Let's represent the full set of requirements symbolically as a circle. Now think about which tool covers the broadest share of your requirements, take its sticky note and put it onto the circle. Please be aware that this is only a "working hypothesis": trust your gut feeling, since you can always revise your tool choice later in the process.

Step 4: Select the tool which covers most of your leftover requirements

Repeat step 3: think about which tool might cover most of your leftover requirements, and put its sticky note into the circle.
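Steps 3 and 4 behave much like two rounds of the classic greedy heuristic for set cover: pick the tool that covers the most requirements, then the tool that covers the most of what is left. The Python sketch below assumes you could write down, per tool, which requirements it covers; in the process above that is a gut-feeling judgement, so the coverage sets here are purely hypothetical.

```python
from typing import Dict, List, Set, Tuple


def build_hierarchy(coverage: Dict[str, Set[str]], requirements: Set[str],
                    rounds: int = 2) -> Tuple[List[str], Set[str]]:
    """Steps 3 and 4: repeatedly pick the tool covering most still-uncovered requirements.

    Returns the selection hierarchy and the requirements no chosen tool covers.
    """
    remaining = set(requirements)
    hierarchy: List[str] = []
    for _ in range(rounds):
        # Sorting makes ties deterministic: max() keeps the first maximal candidate.
        candidates = sorted(t for t in coverage if t not in hierarchy)
        if not remaining or not candidates:
            break
        best = max(candidates, key=lambda t: len(coverage[t] & remaining))
        if not coverage[best] & remaining:
            break  # no remaining tool adds anything new
        hierarchy.append(best)
        remaining -= coverage[best]
    return hierarchy, remaining


# Hypothetical coverage estimates; in practice these come from your own judgement.
requirements = {"R1", "R2", "R3", "R4", "R5", "R6"}
coverage = {
    "Tool A": {"R1", "R2", "R3", "R4"},
    "Tool B": {"R3", "R4", "R5"},
    "Tool C": {"R5", "R6"},
}
hierarchy, uncovered = build_hierarchy(coverage, requirements)
```

Note that Tool B covers more requirements overall than Tool C, yet Tool C is chosen in the second round, because step 4 only counts the leftover requirements.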

PART C: Validate your working hypothesis

Nothing is more annoying than "strategies" that exist only on paper and cannot be put into practice. Keep in mind that what you've built so far is only what I call a working hypothesis. Now you need to validate it by testing it against reality, which will show whether your gut feeling about the tool selection was right or wrong.

So far you have selected two tools, and together they form a selection hierarchy. For any given or new requirement (or group of requirements), you should now do a hands-on test. Always start with the first chosen tool: How well can you implement the requirement? Does the implementation fulfil your expectations? What do your end users think about it? Do they like it? For now, I leave it up to you to define the "success criteria" that decide whether a prototype implementation passes the hands-on test. If the implementation passes, go with tool #1 for this kind of requirement, now and in future situations.

If the implementation fails the hands-on test with tool #1, move on to tool #2 and repeat the hands-on test with it. Hopefully your prototype now passes, and you can decide to go with tool #2 for this kind of requirement, now and in future situations.
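The validation order can be sketched as a fallback chain over the hierarchy. Since the success criteria are left to you, the `passes` callback and the recorded test results below are hypothetical stand-ins for your own hands-on tests.

```python
from typing import Callable, Dict, Optional, Sequence


def assign_tools(requirements: Sequence[str], hierarchy: Sequence[str],
                 passes: Callable[[str, str], bool]) -> Dict[str, Optional[str]]:
    """Map each requirement to the first tool whose prototype passes the hands-on test.

    None means every tool in the hierarchy failed for that requirement.
    """
    assignment: Dict[str, Optional[str]] = {}
    for req in requirements:
        # next() stops at the first passing tool, so tool #2 is only tested
        # when tool #1 has failed.
        assignment[req] = next((tool for tool in hierarchy if passes(tool, req)), None)
    return assignment


# Hypothetical hands-on results; untested combinations count as failures.
results = {("Tool A", "R1"): True, ("Tool A", "R2"): False, ("Tool C", "R2"): True}


def passes(tool: str, req: str) -> bool:
    return results.get((tool, req), False)


assignment = assign_tools(["R1", "R2", "R3"], ["Tool A", "Tool C"], passes)
```

Here R1 stays with Tool A, R2 falls back to Tool C, and R3 fails both tests, which is exactly the case discussed next.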

What happens if a prototype also fails the second hands-on test? There are three alternatives, depending on how often this happens:

- If the second hands-on test fails for, let's say, less than 10% of requirements, think about a specific solution for these obviously very special requirements. Maybe you simply continue to solve them "manually" in Excel? Maybe you need to buy a niche tool for them? Just find a pragmatic solution case by case.

- If the second hands-on test fails for, let's say, 10% to 30% of requirements, you should think about adding a third tool to your tool selection hierarchy.

- If the second hands-on test fails for a larger share, let's say 30% to 60% of requirements, you should definitely revise your working hypothesis and play through another tool selection hierarchy.
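These rules of thumb can be expressed as a small decision function over the outcome of the hands-on tests. This sketch assumes the thresholds are read as consecutive bands and, as a further assumption, treats any failure share of about 30% or more as a reason to revise the hypothesis; the action labels are illustrative.

```python
from typing import Dict, Optional


def next_action(assignment: Dict[str, Optional[str]]) -> str:
    """Decide what to do based on the share of requirements that failed both tests.

    `assignment` maps each requirement to its tool, or None if both tools failed.
    """
    if not assignment:
        raise ValueError("no requirements have been tested yet")
    failed = sum(1 for tool in assignment.values() if tool is None)
    share = failed / len(assignment)
    if failed == 0:
        return "keep the hierarchy"
    if share < 0.10:
        return "find pragmatic case-by-case solutions"
    if share < 0.30:
        return "add a third tool"
    # Assumption: anything at or above ~30% means the hypothesis needs revising.
    return "revise the working hypothesis"


# Hypothetical outcome: 2 of 10 requirements failed with both tools (20%).
example = {f"R{i}": (None if i <= 2 else "Tool A") for i in range(1, 11)}
action = next_action(example)
```

In practice the percentages are only a guide; a handful of failed requirements that matter a lot to the business may weigh more than the raw share suggests.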

Closing Notes

I'm fully aware that the process outlined here is simplistic, so you might not be able to use it "as is" in your current frontend tool selection project. But it shows the basic idea of how to narrow down the number of useful tools in a given context, namely to build a tool selection hierarchy and validate it with hands-on tests. It's your job to apply it to, or adapt it for, your environment. Let me know what you think about it and how it works in your environment!